Formally, Boolean algebra is defined over a set of elements {0, 1}, two binary operators {AND, OR}, and a single unary operator NOT. These operators are conventionally represented as follows:

· for AND

+ for OR

’ for NOT, thus X’ is NOT(X).

The Boolean operators are completely defined by truth tables.

| AND | OR | NOT |
|---|---|---|
| 0·0 = 0 | 0+0 = 0 | 0’ = 1 |
| 0·1 = 0 | 0+1 = 1 | 1’ = 0 |
| 1·0 = 0 | 1+0 = 1 | |
| 1·1 = 1 | 1+1 = 1 | |

Note that the use of “+” for the OR operation is restricted to those cases in which addition is not being discussed. When addition is also important, we use different symbols for the binary Boolean operators, the most common being ∧ for AND, and ∨ for OR.

There is another notation for the complement (NOT) function that is preferable. If X is a Boolean variable, then $\overline{X}$ is its complement, so that $\overline{0}$ = 1 and $\overline{1}$ = 0. The only reason that this author uses X’ to denote $\overline{X}$ is that the former notation is easier to create in MS-Word.

There is another very handy function, called the XOR (Exclusive OR) function. Although it is not basic to Boolean algebra, it comes in quite handy in circuit design. The symbol for the Exclusive OR function is ⊕. Here is its complete definition using a truth table.

0 ⊕ 0 = 0        0 ⊕ 1 = 1

1 ⊕ 0 = 1        1 ⊕ 1 = 0
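As a quick check of the definitions above, here is a minimal Python sketch of the four operators acting on the values 0 and 1. The function names `AND`, `OR`, `NOT`, and `XOR` are chosen here for illustration; the use of Python's bitwise operators is an assumption of this sketch, not part of the algebra.

```python
# Boolean operators on the set {0, 1}, built from Python's integer operators.
def AND(x, y):
    return x & y

def OR(x, y):
    return x | y

def NOT(x):
    return 1 - x

def XOR(x, y):
    return x ^ y

# Print one line per input combination: X, Y, X AND Y, X OR Y, X XOR Y.
for x in (0, 1):
    for y in (0, 1):
        print(x, y, AND(x, y), OR(x, y), XOR(x, y))
```

Each printed line can be compared against the corresponding entry in the truth tables given above.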

Truth Tables

A truth table for a function of N Boolean variables depends on the fact that there are only $2^N$ different combinations of the values of these N Boolean variables. For small values of N, this allows one to list every possible value of the function.

Consider a Boolean function of two Boolean variables X and

Y. The only possibilities for

the values of the variables are:

X = 0 and Y = 0

X = 0 and Y = 1

X = 1 and Y = 0

X = 1 and Y = 1

Similarly, there are eight possible combinations of the three variables X, Y, and Z, beginning with X = 0, Y = 0, Z = 0 and going through X = 1, Y = 1, Z = 1. Here they are.

X = 0, Y = 0, Z = 0        X = 0, Y = 0, Z = 1        X = 0, Y = 1, Z = 0        X = 0, Y = 1, Z = 1

X = 1, Y = 0, Z = 0        X = 1, Y = 0, Z = 1        X = 1, Y = 1, Z = 0        X = 1, Y = 1, Z = 1
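The enumeration above can be generated mechanically. The following is a small sketch using Python's standard `itertools.product`; the helper name `combinations_in_order` is hypothetical and chosen only for this example.

```python
from itertools import product

def combinations_in_order(n):
    """Return all 2**n assignments of n Boolean variables, from all 0s to all 1s."""
    return list(product((0, 1), repeat=n))

# The eight combinations of X, Y, and Z, in the order listed above.
for x, y, z in combinations_in_order(3):
    print(f"X = {x}, Y = {y}, Z = {z}")
```

Because `product` varies the rightmost position fastest, the assignments come out in the same order as the listing above.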

As we shall see, we prefer truth tables for functions of not too many variables.

| X | Y | F(X, Y) |
|---|---|---|
| 0 | 0 | 1 |
| 0 | 1 | 0 |
| 1 | 0 | 0 |
| 1 | 1 | 1 |

The table above is a truth table for a two-variable function. Note that we have four rows in the truth table, corresponding to the four possible combinations of values for X and Y. Note also the standard order in which the values are written: 00, 01, 10, and 11. Other orders can be used when needed (it is done below), but one must list all combinations.

| X | Y | Z | F1 | F2 |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 1 | 0 |
| 0 | 1 | 0 | 1 | 0 |
| 0 | 1 | 1 | 0 | 1 |
| 1 | 0 | 0 | 1 | 0 |
| 1 | 0 | 1 | 0 | 1 |
| 1 | 1 | 0 | 0 | 1 |
| 1 | 1 | 1 | 1 | 1 |

For another example of truth tables, we consider the figure

above, which shows two Boolean

functions of three Boolean variables.

Truth tables can be used to define more than one

function at a time, although they become hard to read if either the number of

variables

or the number of functions is too large.

Here we use the standard shorthand of F1 for

F1(X, Y, Z) and F2 for F2(X, Y, Z). Also

note the standard ordering of the

rows, beginning with 0 0 0 and ending with 1 1 1. This causes less confusion than

other ordering schemes, which may be used when there is a good reason for them.

As an example of a truth table in which non-standard

ordering might be useful, consider the

following table for two variables. As

expected, it has four rows.

| X | Y | X · Y | X + Y |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 1 |
| 0 | 1 | 0 | 1 |
| 1 | 1 | 1 | 1 |

A truth table in this non-standard ordering would be used to prove the standard Boolean axioms:

X · 0 = 0 for all X        X + 0 = X for all X

X · 1 = X for all X        X + 1 = 1 for all X

In future lectures we shall use truth tables to specify functions without needing to consider their algebraic representations. Because $2^N$ is such a fast growing function, we give truth tables for functions of 2, 3, and 4 variables only, with 4, 8, and 16 rows, respectively. Truth tables for 4 variables, having 16 rows, are almost too big. For five or more variables, truth tables become unusable, having 32 or more rows.

Labeling Rows in Truth Tables

We now discuss a notation that is commonly used to identify rows in truth tables. The exact identity of the rows is given by the values for each of the variables, but we find it convenient to label the rows with the integer equivalent of the binary values. We noted above that for N variables, the truth table has $2^N$ rows. These are conventionally numbered from 0 through $2^N$ – 1 inclusive to give us a handy way to reference the rows. Thus a two variable truth table would have four rows numbered 0, 1, 2, and 3.

Here is a truth table with labeled rows.

| Row | A | B | G(A, B) |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 1 | 0 | 1 | 1 |
| 2 | 1 | 0 | 1 |
| 3 | 1 | 1 | 0 |

We can see that G(A, B) = A ⊕ B, but this value has nothing to do with the row numberings, which are just the decimal equivalents of the values in the A & B columns as binary.

0 = 0·2 + 0·1

1 = 0·2 + 1·1

2 = 1·2 + 0·1

3 = 1·2 + 1·1

A three variable truth table would have eight rows, numbered 0, 1, 2, 3, 4, 5, 6, and 7. Here is a three variable truth table for a function F(X, Y, Z) with the rows numbered.

| Row Number | X | Y | Z | F(X, Y, Z) |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 1 |
| 1 | 0 | 0 | 1 | 1 |
| 2 | 0 | 1 | 0 | 0 |
| 3 | 0 | 1 | 1 | 1 |
| 4 | 1 | 0 | 0 | 1 |
| 5 | 1 | 0 | 1 | 0 |
| 6 | 1 | 1 | 0 | 1 |
| 7 | 1 | 1 | 1 | 1 |

Note that the row numbers correspond to the

decimal value of the three bit binary, thus

0 = 0·4 + 0·2 + 0·1

1 = 0·4 + 0·2 + 1·1

2 = 0·4 + 1·2 + 0·1

3 = 0·4 + 1·2 + 1·1

4 = 1·4 + 0·2 + 0·1

5 = 1·4 + 0·2 + 1·1

6 = 1·4 + 1·2 + 0·1

7 = 1·4 + 1·2 + 1·1

Truth tables are purely Boolean tables in which decimal numbers, such as the row numbers above, do not really play a part. However, we find the ability to label a row with a decimal number to be very convenient, and so we use it. The row numberings can be quite important for the standard algebraic forms used in representing Boolean functions.
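The row-numbering convention described above amounts to reading the variable values as an unsigned binary number. A minimal sketch follows, assuming the leftmost variable is the most significant bit; the function name `row_number` is hypothetical.

```python
def row_number(bits):
    """Read a sequence of 0/1 values, most significant first, as an integer."""
    n = 0
    for b in bits:
        n = 2 * n + b  # shift the running value left one place and add the next bit
    return n

# Row labels for a three-variable table: X is weighted 4, Y is weighted 2, Z is weighted 1.
for x in (0, 1):
    for y in (0, 1):
        for z in (0, 1):
            print(row_number((x, y, z)), x, y, z)
```

The loop body performs the same calculation as the sums 1·4 + 0·2 + 1·1 shown above, one bit at a time.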

Question: Where to Put the Ones and Zeroes

Every truth table corresponds to a Boolean expression. For some truth tables, we begin with

a Boolean expression and evaluate that expression in order to find where to

place the 0’s and

1’s. For other tables, we just place a

bunch of 0’s and 1’s and then ask what Boolean

expression we have created. The truth

table just above was devised by selecting an

interesting pattern of 0’s and 1’s. The

author of these notes had no particular pattern in

mind when creating it. Other truth

tables are more deliberately generated.

Let’s consider the construction of a truth table for the Boolean expression

F(X, Y, Z) = X·Y + Y·Z + X·$\overline{Y}$·Z

Let’s evaluate this function for all eight possible values of X, Y, Z.

X = 0 Y = 0 Z = 0        F(X, Y, Z) = 0·0 + 0·0 + 0·1·0 = 0 + 0 + 0 = 0

X = 0 Y = 0 Z = 1        F(X, Y, Z) = 0·0 + 0·1 + 0·1·1 = 0 + 0 + 0 = 0

X = 0 Y = 1 Z = 0        F(X, Y, Z) = 0·1 + 1·0 + 0·0·0 = 0 + 0 + 0 = 0

X = 0 Y = 1 Z = 1        F(X, Y, Z) = 0·1 + 1·1 + 0·0·1 = 0 + 1 + 0 = 1

X = 1 Y = 0 Z = 0        F(X, Y, Z) = 1·0 + 0·0 + 1·1·0 = 0 + 0 + 0 = 0

X = 1 Y = 0 Z = 1        F(X, Y, Z) = 1·0 + 0·1 + 1·1·1 = 0 + 0 + 1 = 1

X = 1 Y = 1 Z = 0        F(X, Y, Z) = 1·1 + 1·0 + 1·0·0 = 1 + 0 + 0 = 1

X = 1 Y = 1 Z = 1        F(X, Y, Z) = 1·1 + 1·1 + 1·0·1 = 1 + 1 + 0 = 1

From the above, we create the truth table for the function. Here it is.

| X | Y | Z | F(X, Y, Z) |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 0 |
| 0 | 1 | 0 | 0 |
| 0 | 1 | 1 | 1 |
| 1 | 0 | 0 | 0 |
| 1 | 0 | 1 | 1 |
| 1 | 1 | 0 | 1 |
| 1 | 1 | 1 | 1 |
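The construction of this table can also be done by program. The sketch below assumes the expression being tabulated is X·Y + Y·Z + X·Y’·Z, which is what the term-by-term evaluations above indicate; the names `F` and `table` are chosen for illustration.

```python
from itertools import product

def F(x, y, z):
    # X·Y + Y·Z + X·Y'·Z, with NOT written as (1 - y), AND as &, and OR as |
    return (x & y) | (y & z) | (x & (1 - y) & z)

# One entry per row, in the standard order 000 through 111.
table = [(x, y, z, F(x, y, z)) for x, y, z in product((0, 1), repeat=3)]

for row in table:
    print(*row)
```

The last column printed should agree with the F(X, Y, Z) column of the table above.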

A bit later we shall study how to derive Boolean expressions

from a truth table. Truth tables

used as examples for this part of the course do not appear to be associated

with a specific

Boolean function. Often the truth tables

are associated with well-known functions, but the

point is to derive that function starting only with 0’s and 1’s.

Consider the truth table given below, with no explanation of

the method used to generate the

values of F1 and F2 for each row.

| Row | X | Y | Z | F1 | F2 |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 1 | 1 | 0 |
| 2 | 0 | 1 | 0 | 1 | 0 |
| 3 | 0 | 1 | 1 | 0 | 1 |
| 4 | 1 | 0 | 0 | 1 | 0 |
| 5 | 1 | 0 | 1 | 0 | 1 |
| 6 | 1 | 1 | 0 | 0 | 1 |
| 7 | 1 | 1 | 1 | 1 | 1 |

Figure: Our Sample Functions F1 and F2

Students occasionally ask how the author knew where to place the 0’s and 1’s in the above table. There are two answers to this, both equally valid. We reiterate the statement that a Boolean function is completely specified by its truth table. Thus, one can just make an arbitrary list of $2^N$ 0’s and 1’s and then decide what function of N Boolean variables has been represented. In that view, the function F2 is that function specified by the sequence (0, 0, 0, 1, 0, 1, 1, 1) and nothing more. We can use methods described below to assign it a functional representation. Note that F2 is 1 if and only if at least two of X, Y, and Z are 1. Given this, we can give a functional description of the function as F2 = X·Y + X·Z + Y·Z.

As the student might suspect, neither the pattern of 0’s and 1’s for F1 nor that for F2 was arbitrarily selected. The real answer is that the instructor derived the truth table from a set of known Boolean expressions, one for F1 and one for F2. The student is invited to compute the value of F2 = X·Y + X·Z + Y·Z for all possible values of X, Y, and Z; this will verify the numbers as shown in the truth table.
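The invitation above can be taken up with a few lines of Python. This sketch treats F2 in both ways described: as a bare sequence of eight values indexed by row number, and as the expression X·Y + X·Z + Y·Z. The names `F2_VALUES`, `f2_lookup`, and `f2_formula` are hypothetical.

```python
from itertools import product

# View 1: F2 is nothing more than its column of values, indexed by row number.
F2_VALUES = (0, 0, 0, 1, 0, 1, 1, 1)

def f2_lookup(x, y, z):
    return F2_VALUES[4 * x + 2 * y + z]

# View 2: F2 is given by a Boolean expression.
def f2_formula(x, y, z):
    return (x & y) | (x & z) | (y & z)

# The two views agree if they match on every one of the eight rows.
agree = all(f2_lookup(*v) == f2_formula(*v) for v in product((0, 1), repeat=3))
print("F2 matches X·Y + X·Z + Y·Z:", agree)
```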

We have noted that a truth table of two variables has four rows (numbered 0, 1, 2, and 3) and that a truth table of three variables has eight rows (numbered 0 through 7). We now prove that a truth table of N variables has $2^N$ rows, numbered 0 through $2^N$ – 1. Here is an inductive proof, beginning with the case of one variable.

1. Base case: a function of one variable X requires 2 rows, one row for X = 0 and one row for X = 1.

2. If a function of N Boolean variables $X_1, X_2, \ldots, X_N$ requires $2^N$ rows, then the function of (N + 1) variables $X_1, X_2, \ldots, X_N, X_{N+1}$ would require

   $2^N$ rows for $X_1, X_2, \ldots, X_N$ when $X_{N+1}$ = 0

   $2^N$ rows for $X_1, X_2, \ldots, X_N$ when $X_{N+1}$ = 1

3. $2^N + 2^N = 2^{N+1}$, so the function of (N + 1) variables requires $2^{N+1}$ rows.

While we are at it, we show that the number of Boolean functions of N Boolean variables is $2^R$ where R = $2^N$, thus the number is $2^{2^N}$. The argument is quite simple. We have shown that the number of rows in a truth table is given by R = $2^N$. The value in the first row could be a 0 or 1; thus two choices. Each of the R = $2^N$ rows could have two choices, thus the total number of functions is $2^R$ where R = $2^N$.

For N = 1, R = 2, and $2^2$ = 4. A truth table for the function F(X) would have two rows, one for X = 0 and one for X = 1. There are four functions of a single Boolean variable.

F1(X) = 0, F2(X) = 1, F3(X) = X, and F4(X) = $\overline{X}$.

It might be interesting to give a table of the number of

rows in a truth table and number of

possible Boolean functions for N variables.

The number of rows grows quickly, but the

number of functions grows at an astonishing rate.

| N | R = $2^N$ | $2^R$ |
|---|---|---|
| 1 | 2 | 4 |
| 2 | 4 | 16 |
| 3 | 8 | 256 |
| 4 | 16 | 65 536 |
| 5 | 32 | 4 294 967 296 |
| 6 | 64 | $2^{64}$ ≈ 1.845·$10^{19}$ |

Note on computation: log 2 = 0.30103, so $2^{64} = (10^{0.30103})^{64} = 10^{19.266}$. Also log 1.845 = 0.266, so $10^{0.266}$ ≈ 1.845 and $10^{19.266}$ ≈ 1.845·$10^{19}$.
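The counts in the table, and the logarithm estimate in the note, can be reproduced with Python's arbitrary-precision integers and the standard `math` module. This is only a sketch; the function names `rows` and `function_count` are chosen here for illustration.

```python
import math

def rows(n):
    """Number of rows in a truth table of n variables."""
    return 2 ** n

def function_count(n):
    """Number of distinct Boolean functions of n variables: 2 raised to the number of rows."""
    return 2 ** rows(n)

for n in range(1, 7):
    print(n, rows(n), function_count(n))

# The estimate from the note: 2**64 = 10**(64 * log10(2)).
exponent = 64 * math.log10(2)
mantissa = 10 ** (exponent - math.floor(exponent))
print(f"2^64 is roughly {mantissa:.3f} x 10^{math.floor(exponent)}")
```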

The number of Boolean functions of N Boolean variables is somewhat of interest. Of more interest in this course is the number of rows in any possible truth-table representation of a function of N Boolean variables. For N = 2, 3, and 4, we have $2^N$ = 4, 8, and 16 respectively, so that truth tables for 2, 3, and 4 variables are manageable. Truth tables for five variables are a bit unwieldy and truth tables for more than five variables are almost useless.

Truth Tables and Associated Tables with Don’t Care Conditions

At this point, we mention a convention normally used for writing large truth tables and associated tables in which there is significant structure. This is called the “don’t care” condition, denoted by a “d” in the table. When that notation appears, it indicates that the value of the Boolean variable for that slot can be either 0 or 1, with the same effect in either case.

Let’s look at two tables, each of which will be seen and discussed later in this textbook. We begin with a table that is used to describe control of memory; it has descriptive text.

| Select | $R/\overline{W}$ | Action |
|---|---|---|
| 0 | 0 | Memory not active |
| 0 | 1 | Memory not active |
| 1 | 0 | CPU writes to memory |
| 1 | 1 | CPU reads from memory |

The two control variables are Select and $R/\overline{W}$. But note that when Select = 0, the action of the memory is totally independent of the value of $R/\overline{W}$. For this reason, we may write:

| Select | $R/\overline{W}$ | Action |
|---|---|---|
| 0 | d | Memory not active |
| 1 | 0 | CPU writes to memory |
| 1 | 1 | CPU reads from memory |

Consider the truth table for a 2–to–4 active–high decoder that is enabled high. The complete version is shown first; it has 8 rows and describes 4 outputs: Y0, Y1, Y2, and Y3.

| Enable | X1 | X0 | Y0 | Y1 | Y2 | Y3 |
|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 0 | 0 | 0 | 0 |
| 0 | 1 | 0 | 0 | 0 | 0 | 0 |
| 0 | 1 | 1 | 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 1 | 0 | 0 | 0 |
| 1 | 0 | 1 | 0 | 1 | 0 | 0 |
| 1 | 1 | 0 | 0 | 0 | 1 | 0 |
| 1 | 1 | 1 | 0 | 0 | 0 | 1 |

The more common description uses the “don’t care” notation.

| Enable | X1 | X0 | Y0 | Y1 | Y2 | Y3 |
|---|---|---|---|---|---|---|
| 0 | d | d | 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 1 | 0 | 0 | 0 |
| 1 | 0 | 1 | 0 | 1 | 0 | 0 |
| 1 | 1 | 0 | 0 | 0 | 1 | 0 |
| 1 | 1 | 1 | 0 | 0 | 0 | 1 |

This latter form is simpler to

read. The student should not make the

mistake of considering

the “d” as an algebraic value. What the

first row says is that if Enable = 0, then I don’t care

what X1 and X0 are; even if they have different values,

all outputs are 0.

The next section will discuss conversion of a truth table

into a Boolean expression. The

safest way to do this is to convert a table with “don’t cares” back to the full

representation.
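Converting a table with “don’t cares” back to the full representation is mechanical: each “d” stands for both 0 and 1. The sketch below applies this to the decoder table; the row encoding (a pair of input and output tuples, with the string `"d"` marking a don’t care) and the names `expand`, `compact`, and `full` are assumptions made for this example.

```python
from itertools import product

def expand(row):
    """Expand one row whose inputs may contain 'd' into fully specified rows."""
    inputs, outputs = row
    # A 'd' contributes both values; a fixed input contributes only itself.
    choices = [(0, 1) if v == "d" else (v,) for v in inputs]
    return [(combo, outputs) for combo in product(*choices)]

# Inputs are (Enable, X1, X0); outputs are (Y0, Y1, Y2, Y3).
compact = [
    ((0, "d", "d"), (0, 0, 0, 0)),
    ((1, 0, 0), (1, 0, 0, 0)),
    ((1, 0, 1), (0, 1, 0, 0)),
    ((1, 1, 0), (0, 0, 1, 0)),
    ((1, 1, 1), (0, 0, 0, 1)),
]

full = [r for row in compact for r in expand(row)]
for inputs, outputs in full:
    print(*inputs, "|", *outputs)
```

The five compact rows expand to the eight rows of the complete decoder table.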

Evaluation of Boolean Expressions

Here is another topic that this

instructor normally forgets to mention, as it is so natural to

one who has been in the “business” for many years. The question to be addressed now is:

“What are the rules for evaluating Boolean expressions?”

Operator Precedence

The main question to be

addressed is the relative precedence of the basic Boolean operators:

AND, OR, and NOT. These rules are based

on the algebraic model, which does not use the

XOR function; its precedence is not defined.

The relative precedence in any programming

language is specified by that language.

The relative precedence of the

operators is:

1) NOT do this first

2) AND

3) OR do this last

Consider the Boolean expression A·B + C·D, often written as AB + CD. Without the precedence rules, there are two valid interpretations: either (A·B) + (C·D) or A·(B + C)·D. The precedence rules for the operators indicate that the first is the correct interpretation; in this, Boolean algebra follows standard algebra as taught in high school. Consider now the expression $\overline{A}$·B + C·$\overline{D}$; according to our rules, this is read as ($\overline{A}$·B) + (C·$\overline{D}$).

Note that parentheses and explicit extension of the NOT overbar can override the precedence rules, so that A·(B + C)·D is read as the logical AND of three terms: A, (B + C), and D. Note also that the two expressions $\overline{A \cdot B}$ and $\overline{A} \cdot \overline{B}$ are different. The first expression, better written as $\overline{(A \cdot B)}$, refers to the logical NOT of the logical AND of A and B; in a language such as LISP it would be written as (NOT (AND A B)). The second expression, due to the precedence rules, refers to the logical AND of the logical NOT of A and the logical NOT of B; in LISP this might be written as (AND (NOT A) (NOT B)).

Evaluation of Boolean expressions implies giving values to the variables and following the precedence rules in applying the logical operators. Let A = 1, B = 0, C = 1, and D = 1.

A·B + C·D = 1·0 + 1·1 = 0 + 1 = 1

A·(B + C)·D = 1·(0 + 1)·1 = 1 · 1 · 1 = 1

$\overline{A}$·B + C·$\overline{D}$ = $\overline{1}$·0 + 1·$\overline{1}$ = 0 · 0 + 1 · 0 = 0 + 0 = 0

$\overline{A \cdot B}$ = $\overline{1 \cdot 0}$ = $\overline{0}$ = 1

$\overline{A} \cdot \overline{B}$ = $\overline{1} \cdot \overline{0}$ = 0 · 1 = 0

Also

A·(B + C·D) = 1·(0 + 1·1) = 1 · (0 + 1) = 1 · 1 = 1

(A·B + C)·D = (1·0 + 1)·1 = (0 + 1) · 1 = 1
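As noted earlier, each programming language specifies its own operator precedence. Python's `not`, `and`, and `or` happen to follow the same order as the algebraic rules (NOT first, then AND, then OR), so the evaluations above can be sketched directly. The variable names `e1` through `e5` are chosen only for this example.

```python
A, B, C, D = True, False, True, True

e1 = A and B or C and D          # parsed as (A and B) or (C and D)
e2 = A and (B or C) and D        # parentheses override the precedence rules
e3 = not A and B or C and not D  # parsed as ((not A) and B) or (C and (not D))
e4 = not (A and B)               # NOT applied to the whole AND
e5 = not A and not B             # parsed as (not A) and (not B)

for name, value in (("e1", e1), ("e2", e2), ("e3", e3), ("e4", e4), ("e5", e5)):
    print(name, int(value))
```

The printed values can be compared, in order, with the first five evaluations worked by hand above.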

The Basic Axioms and Postulates of Boolean Algebra

We close our discussion of Boolean algebra by giving a formal definition of the algebra along with a listing of its basic axioms and postulates.

Definition: A Boolean algebra is a closed algebraic system containing a set K of two or more elements and three operators, two binary and one unary. The binary operators are denoted “+” for OR and “·” for AND. The unary operator is NOT, denoted by an overbar placed over the variable. The system is closed, so that for all X and Y in K, we have X + Y in K, X · Y in K, and NOT(X) in K. The set K must contain two constants 0 and 1 (FALSE and TRUE), which obey the postulates below. In normal use, we say K = {0, 1}, written in set notation.

The postulates of Boolean algebra are:

| Postulate | a) | b) |
|---|---|---|
| P1 Existence of 0 and 1 | X + 0 = X for all X | X · 1 = X for all X |
| P2 Commutativity | X + Y = Y + X for all X and Y | X · Y = Y · X for all X and Y |
| P3 Associativity | X + (Y + Z) = (X + Y) + Z, for all X, Y, and Z | X · (Y · Z) = (X · Y) · Z, for all X, Y, and Z |
| P4 Distributivity | X · (Y + Z) = (X · Y) + (X · Z), for all X, Y, Z | X + (Y · Z) = (X + Y) · (X + Z), for all X, Y, Z |
| P5 Complement | X + $\overline{X}$ = 1, for all X | X · $\overline{X}$ = 0, for all X |

The theorems of Boolean algebra are:

| Theorem | a) | b) |
|---|---|---|
| T1 Idempotency | X + X = X, for all X | X · X = X, for all X |
| T2 1 and 0 Properties | X + 1 = 1, for all X | X · 0 = 0, for all X |
| T3 Absorption | X + (X · Y) = X, for all X and Y | X · (X + Y) = X, for all X and Y |
| T4 Absorption | X + $\overline{X}$·Y = X + Y, for all X and Y | X · ($\overline{X}$ + Y) = X · Y, for all X and Y |
| T5 DeMorgan’s Laws | $\overline{X + Y}$ = $\overline{X}$ · $\overline{Y}$ | $\overline{X \cdot Y}$ = $\overline{X}$ + $\overline{Y}$ |
| T6 Consensus | X·Y + $\overline{X}$·Z + Y·Z = X·Y + $\overline{X}$·Z | (X + Y)·($\overline{X}$ + Z)·(Y + Z) = (X + Y)·($\overline{X}$ + Z) |

The observant student will note that most of the postulates,

excepting only P4b, seem to be

quite reasonable and based on experience in high-school algebra. Some of the theorems

seem reasonable or at least not sufficiently different from high school algebra

to cause

concern, but others such as T1 and T2 are decidedly different. Most of the unsettling

differences have to do with the properties of the Boolean OR function, which is

quite

different from standard addition, although commonly denoted with the same

symbol.

The principle of duality is another property that is

unique to Boolean algebra – there is

nothing like it in standard algebra.

This principle says that if a statement is true in Boolean

algebra, so is its dual. The dual of a

statement is obtained by changing ANDs to ORs, ORs

to ANDs, 0s to 1s, and 1s to 0s. In the

above, we can arrange the postulates as duals.

| Postulate | Dual |
|---|---|
| 0·X = 0 | 1 + X = 1 |
| 1·X = X | 0 + X = X |
| 0 + X = X | 1·X = X |
| 1 + X = 1 | 0·X = 0 |

These last two statements just repeat the first two if one reads correctly.

Both standard algebra and Boolean algebra have distributive

postulates. Standard algebra

has one distributive postulate; Boolean algebra must have two distributive

postulates as a

result of the principle of duality.

In standard algebra, we have the equality A·(B + C) = A·B + A·C for all values of A, B, and C. This is the distributive postulate as seen in high school; we know it and expect it.

In Boolean algebra we have the distributive postulate A·(B + C) = A·B + A·C, which looks familiar. The principle of duality states that if A·(B + C) = A·B + A·C is true then the dual statement A + B·C = (A + B)·(A + C) must also be true. We prove the second statement using a method unique to Boolean algebra. This method depends on the fact that there are only two possible values for A: A = 0 and A = 1. We consider both cases using a proof technique much favored by this instructor: consider both possibilities for one variable.

If A = 1, the statement becomes 1 + B·C = (1 + B)·(1 + C), or 1 = 1·1, obviously true.

If A = 0, the statement becomes 0 + B·C = (0 + B)·(0 + C), or B·C = B·C.

Just for fun, we offer a truth-table proof of the second distributive postulate.

| A | B | C | B·C | A + B·C | | (A + B) | (A + C) | (A + B)·(A + C) |
|---|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | | 0 | 0 | 0 |
| 0 | 0 | 1 | 0 | 0 | | 0 | 1 | 0 |
| 0 | 1 | 0 | 0 | 0 | | 1 | 0 | 0 |
| 0 | 1 | 1 | 1 | 1 | | 1 | 1 | 1 |
| 1 | 0 | 0 | 0 | 1 | | 1 | 1 | 1 |
| 1 | 0 | 1 | 0 | 1 | | 1 | 1 | 1 |
| 1 | 1 | 0 | 0 | 1 | | 1 | 1 | 1 |
| 1 | 1 | 1 | 1 | 1 | | 1 | 1 | 1 |

Figure: A + B·C = (A + B)·(A + C)

Note that to complete the proof, one must construct the

truth table, showing columns for

each of the two functions A + B·C and (A + B)·(A + C), then note that the contents of the

two columns are identical for each row.
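The truth-table method of proof lends itself to a short program: compute both expressions for every input combination and compare. The following is a minimal sketch; the helper name `equivalent` and the lambda names `lhs` and `rhs` are hypothetical.

```python
from itertools import product

def equivalent(f, g, n):
    """True if f and g agree on every one of the 2**n input combinations."""
    return all(f(*v) == g(*v) for v in product((0, 1), repeat=n))

# The second distributive postulate: A + B·C = (A + B)·(A + C).
lhs = lambda a, b, c: a | (b & c)
rhs = lambda a, b, c: (a | b) & (a | c)

print("A + B·C = (A + B)·(A + C):", equivalent(lhs, rhs, 3))
```

The same helper can be pointed at any of the theorems in this section, provided both sides are written as functions of the same number of variables.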

A Few More Proofs

At this point in the course, we

note two facts:

1) That

some of the theorems above look a bit strange.

2) That

we need more work on proof of Boolean theorems.

Towards that end, let’s look at a few variants of the Absorption Theorem. We prove that

X + (X · Y) = X, for all X and Y

The first proof will use a

truth table. Remember that the truth

table will have four rows,

corresponding to the four possible combinations of values for X and Y:

X = 0 and Y = 0, X = 0 and Y = 1, X = 1 and Y = 0, X = 1 and Y = 1.

| X | Y | X · Y | X + (X · Y) |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 0 | 1 | 0 | 0 |
| 1 | 0 | 0 | 1 |
| 1 | 1 | 1 | 1 |

Having computed the value of X + (X · Y) for all possible combinations of X and Y, we note that in each case we have X + (X · Y) = X. Thus, we conclude that the theorem is true.

Here is another proof method

much favored by this author. It depends

on the fact that there

are only two possible values of X: X = 0 and X = 1. A theorem involving the variable X is

true if and only if it is true for both X = 0 and X = 1. Consider the above theorem, with the

claim that X + (X ·

Y) = X. We look at the cases X = 0 and X

= 1, computing both the LHS

(Left Hand Side of the Equality) and RHS (Right Hand Side of the Equality).

If X = 0, then X + (X · Y) = 0 + (0 · Y) = 0 + 0 = 0, and LHS = RHS.

If X = 1, then X + (X · Y) = 1 + (1 · Y) = 1 + Y = 1, and LHS = RHS.

Consider now a trivial variant of the absorption theorem.

$\overline{X}$ + ($\overline{X}$ · Y) = $\overline{X}$, for all X and Y

There are two ways to prove this variant of the theorem, given that the above standard statement of the absorption theorem is true. An experienced mathematician would claim that the proof is obvious. We shall make it obvious.

The absorption theorem, as proved, is X + (X · Y) = X, for all X and Y. We restate the theorem, substituting the variable W for X, getting W + (W · Y) = W, for all W and Y. Now, we let W = $\overline{X}$, and the result is indeed obvious.

We close this section with a proof of the other standard variant of the absorption theorem, that X + ($\overline{X}$ · Y) = X + Y for all X and Y. The theorem can also be proved using the two case method.

| X | Y | $\overline{X}$ · Y | X + ($\overline{X}$ · Y) | X + Y |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 |
| 0 | 1 | 1 | 1 | 1 |
| 1 | 0 | 0 | 1 | 1 |
| 1 | 1 | 0 | 1 | 1 |

Yet Another Sample Proof

While this instructor seems to prefer the obscure “two-case” method of proof, most students prefer the truth table approach. We shall use the truth table approach to prove one of the variants of DeMorgan’s laws: $\overline{X \cdot Y}$ = $\overline{X}$ + $\overline{Y}$.

| X | Y | X · Y | $\overline{X \cdot Y}$ | | $\overline{X}$ | $\overline{Y}$ | $\overline{X}$ + $\overline{Y}$ | Comment |
|---|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 1 | | 1 | 1 | 1 | Same |
| 0 | 1 | 0 | 1 | | 1 | 0 | 1 | Same |
| 1 | 0 | 0 | 1 | | 0 | 1 | 1 | Same |
| 1 | 1 | 1 | 0 | | 0 | 0 | 0 | Same |

In using a truth table to show that two expressions are equal, one generates a column for each of the functions and shows that the values of the two functions are the same for every possible combination of the input variables. Here, we have computed the values of both $\overline{X \cdot Y}$ and ($\overline{X}$ + $\overline{Y}$) for all possible combinations of the values of X and Y and shown that for every possible combination the two functions have the same value. Thus they are equal.

Some Basic Forms: Product of Sums and Sum of Products

We conclude our discussion of Boolean algebra by giving a

formal definition to two

notations commonly used to represent Boolean functions:

SOP Sum of Products

POS Product of Sums

We begin by assuming the definition of a variable. A literal is either a variable in its true form or its complemented form; examples are A, C’, and D. A product term is the logical AND of one or more literals; a sum term is the logical OR of one or more literals. According to the strict definition, the single literal B’ is both a product term and a sum term.

A sum of products (SOP) is the logical OR of one or more product terms.

A product of sums (POS) is the logical AND of one or more sum terms.

Sample SOP:    A + B·C        A·B + A·C + B·C        A·B + A·C + A·B·C

Sample POS:    A·(B + C)      (A + B)·(A + C)·(B + C)      (A + B)·(A + C)·(A + B + C)

The student will note a few oddities in the above definition. These are present in order to avoid special cases in some of the more general theorems. The first oddity is the mention of the logical AND of one term and the logical OR of one term; each of these operators is a binary operator and requires two input variables. What we are saying here is that if we take a single literal as either a sum term or a product term, our notation is facilitated. Consider the expression (A + B + C), which is either a POS or SOP expression depending on its use. As a POS expression, it is a single sum term comprising three literals. As an SOP expression, it is the logical OR of three product terms, each of which comprises a single literal. For cases such as these the only rule is to have a consistent interpretation.

We now consider the concept of normal and canonical forms. These forms apply to both Sum of Products and Product of Sums expressions, so we may have quite a variety of expression types including the following.

1. Not in any form at all.

2. Sum of Products, but not normal.

3. Product of Sums, but not normal.

4. Normal Sum of Products.

5. Normal Product of Sums.

6. Canonical Sum of Products.

7. Canonical Product of Sums.

In order to define the concept of a normal form, we must consider the idea of inclusion. A product term X is included in another product term Y if every literal that is in X is also in Y. A sum term X is included in another sum term Y if every literal that is in X is also in Y. For inclusion, both terms must be product terms or both must be sum terms. Consider the SOP formula A·B + A·C + A·B·C. Note that the first term is included in the third term, as is the second term. The third term A·B·C contains the first term A·B, etc.

Consider the POS formula (A + B)·(A + C)·(A + B + C). Again, the first term (A + B) is included in the third term (A + B + C), as is the second term.

An extreme form of inclusion is observed when the expression has identical terms. Examples of this would be the SOP expression A·B + A·C + A·B and the POS expression (A + B)·(A + C)·(A + C). Each of these has duplicate terms, so that the inclusion is 2-way.

The basic idea is that an expression with included (or duplicate) terms is not written in the simplest possible form. The idea of simplifying such expressions arises from the theorems of Boolean algebra, specifically the following two.

| Theorem | a) | b) |
|---|---|---|
| T1 Idempotency | X + X = X, for all X | X · X = X, for all X |
| T3 Absorption | X + (X · Y) = X, for all X and Y | X · (X + Y) = X, for all X and Y |

As a direct consequence of these theorems, we can perform the following simplifications.

A·B + A·C + A·B = A·B + A·C

(A + B)·(A + C)·(A + C) = (A + B)·(A + C)

A·B + A·C + A·B·C = A·B + A·C

(A + B)·(A + C)·(A + B + C) = (A + B)·(A + C)
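The removal of duplicate and included terms can be sketched in a few lines of Python. This is an illustration, not a standard algorithm from any library. It assumes each term is represented as a set of literal strings such as `"A"` or `"A'"`, and the function name `remove_included` is hypothetical. Since the rule is the same for product terms in an SOP and sum terms in a POS, one function serves both.

```python
def remove_included(terms):
    """Drop duplicate terms, then drop any term that contains all the literals of another term."""
    unique = []
    for t in map(frozenset, terms):
        if t not in unique:  # idempotency: keep one copy of a repeated term
            unique.append(t)
    # Absorption: a term is redundant if some other term is a proper subset of it.
    return [t for t in unique if not any(other < t for other in unique)]

# A·B + A·C + A·B·C simplifies to A·B + A·C.
print(remove_included([{"A", "B"}, {"A", "C"}, {"A", "B", "C"}]))

# A·B + A·C + A'·B·C has no included terms, because A' is a different literal from A.
print(remove_included([{"A", "B"}, {"A", "C"}, {"A'", "B", "C"}]))
```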

We now consider these two formulae with slight alterations: A·B + A·C + A’·B·C and (A + B)·(A + C)·(A’ + B + C). Since the literal must be included exactly, neither of the formulae in this second set contains included terms.

We now can produce the definitions of normal forms. A formula is in a normal form only if it contains no included terms; thus, a normal SOP form is a SOP form with no included terms and a normal POS form is a POS form with no included terms.

Sample Normal SOP: A + B·C A·B + A·C + B·C A’·B + A·C + B·C’

Sample

We now can define the canonical forms. A normal form over a number of variables is in canonical form if every term contains each variable in either the true or complemented form. A canonical SOP form is a normal SOP form in which every product term contains a literal for every variable. A canonical POS form is a normal POS form in which every sum term contains a literal for every variable.

Note that all canonical forms

are also normal forms. Canonical forms

correspond directly to

truth tables and can be considered as one-to-one translations of truth

tables. Here are the

rules for converting truth tables to canonical forms.

To produce the Sum of Products representation from a truth table, follow this rule.

a) Generate a product term for each row where the value of the function is 1.

b) The variable is complemented if its value in the row is 0, otherwise it is not.

To produce the Product of Sums representation from a truth table, follow this rule.

a) Generate a sum term for each row where the value of the function is 0.

b) The variable is complemented if its value in the row is 1, otherwise it is not.

As an example of conversion from a truth table to the normal forms, consider the two Boolean functions F1 and F2, each of three Boolean variables, denoted A, B, and C. Note that a truth table for a three-variable function requires $2^3$ = 8 rows.

| Row | A | B | C | F1 | F2 |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 1 | 1 | 0 |
| 2 | 0 | 1 | 0 | 1 | 0 |
| 3 | 0 | 1 | 1 | 0 | 1 |
| 4 | 1 | 0 | 0 | 1 | 0 |
| 5 | 1 | 0 | 1 | 0 | 1 |
| 6 | 1 | 1 | 0 | 0 | 1 |
| 7 | 1 | 1 | 1 | 1 | 1 |

Recall

that the truth table forms a complete specification of both F1 and F2. We may elect

to represent each of F1 and F2 in either normal or canonical form, but that is

not required.

There

are two ways to represent the canonical forms.

We first present a pair of forms that

this author calls the S–list and P–list. The S–list

is used to represent canonical SOP and the

P–list

is used to represent canonical POS forms.

To generate the S–list,

we just list the rows of the truth table for which the function has a

value of 1. In the truth table, we have

the following.

F1 has value 1 for rows 1, 2, 4, and

7; so F1 = S(1,

2, 4, 7).

F2 has value 1 for rows 3, 5, 6, and

7; so F2 = S(3,

5, 6, 7).

To generate the P–list,

we just list the rows of the truth table for which the function has a

value of 0. In the truth table, we have

the following.

F1 has value 0 for rows 0, 3, 5, and 6; so F1 = P(0, 3, 5, 6).

F2 has value 0 for rows 0, 1, 2, and 4; so F2 = P(0, 1, 2, 4).

Note that conversion directly

between the S–list

and P–list

forms is easy if one knows how

many variables are involved. Here we

have 3 variables, with 8 rows numbered 0 through 7.

Thus, if F1 = S(1,

2, 4, 7), then F1

= P(0,

3, 5, 6) because the numbers 0, 3, 5, and 6 are the

only numbers in the range from 0 to 7 inclusive that are not in the S–list. The conversion

rule works both ways. If F2 = P(0,

1, 2, 4), then F2 = S(3,

5, 6, 7) because the numbers

3, 5, 6, and 7 are the only numbers in the range from 0 to 7 inclusive not in

the P–list.
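The conversion rule just described can be sketched in a few lines of Python. The function name `complement_list` is an illustrative choice, not part of the text; it simply returns the row numbers that are missing from the list it is given.

```python
def complement_list(rows, num_vars):
    """Return the row numbers in 0 .. 2**num_vars - 1 that are NOT in `rows`.

    Applied to an S-list it yields the P-list, and vice versa.
    """
    listed = set(rows)
    return [r for r in range(2 ** num_vars) if r not in listed]


# F1 = S(1, 2, 4, 7) and F2 = P(0, 1, 2, 4), both over three variables.
f1_p_list = complement_list([1, 2, 4, 7], 3)
f2_s_list = complement_list([0, 1, 2, 4], 3)
print(f1_p_list)  # [0, 3, 5, 6]
print(f2_s_list)  # [3, 5, 6, 7]
```

Note that the number of variables must be supplied, exactly as the text says: the same list means something different over three variables than over four.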

We now address the generation

of the canonical SOP form of F1 from the truth table. The

rule is to generate a product term for each row for which the function value is

1. Reading

from the top of the truth table, the first row of interest is row 1.

| A | B | C | F1 |
|---|---|---|---|
| 0 | 0 | 1 | 1 |

We have A = 0 for this row, so the corresponding literal in the product term is A’.

We have B = 0 for this row, so the corresponding literal in the product term is B’.

We have C = 1 for this row, so the corresponding literal in the product term is C.

The product term generated for this row in the truth table is A’·B’·C.

We now address the generation of the canonical POS form of F1 from the truth table. The rule is to generate a sum term for each row for which the function value is 0. Reading from the top of the truth table, the first row of interest is row 0.

| A | B | C | F1 |
|---|---|---|---|
| 0 | 0 | 0 | 0 |

We have A = 0 for this row, so the corresponding literal in the sum term is A.

We have B = 0 for this row, so the corresponding literal in the sum term is B.

We have C = 0 for this row, so the corresponding literal in the sum term is C.

The sum term generated for this row in the truth table is A + B + C.

Thus we have the following representation as Sum of Products

F1 = A’·B’·C + A’·B·C’ + A·B’·C’ + A·B·C

F2 = A’·B·C + A·B’·C + A·B·C’ + A·B·C

We have the following representation as Product of Sums

F1 = (A + B + C)·(A + B’ + C’)·(A’ + B + C’)·(A’ + B’ + C)

F2 = (A + B + C)·(A + B + C’)·(A + B’ + C)·(A’ + B + C)
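As a check on the expressions above, the two conversion rules can be sketched in Python. This is only an illustration: the function name `canonical_forms` and the use of an ASCII apostrophe for the complement are assumptions made for the example.

```python
from itertools import product


def canonical_forms(names, outputs):
    """Return (sop, pos) strings for a truth table.

    names   -- variable names, leftmost column first, e.g. "ABC"
    outputs -- function values for rows 0 .. 2**N - 1, in row order
    """
    sop_terms, pos_terms = [], []
    for bits, value in zip(product((0, 1), repeat=len(names)), outputs):
        if value == 1:
            # SOP rule: a variable is complemented if its value in the row is 0.
            literals = [n if b else n + "'" for n, b in zip(names, bits)]
            sop_terms.append("·".join(literals))
        else:
            # POS rule: a variable is complemented if its value in the row is 1.
            literals = [n + "'" if b else n for n, b in zip(names, bits)]
            pos_terms.append("(" + " + ".join(literals) + ")")
    return " + ".join(sop_terms), "·".join(pos_terms)


# The F1 and F2 columns of the truth table, rows 0 through 7.
f1_sop, f1_pos = canonical_forms("ABC", [0, 1, 1, 0, 1, 0, 0, 1])
f2_sop, f2_pos = canonical_forms("ABC", [0, 0, 0, 1, 0, 1, 1, 1])
print("F1 =", f1_sop)
print("F1 =", f1_pos)
print("F2 =", f2_sop)
print("F2 =", f2_pos)
```

The printed strings should match the four expressions given above, term for term.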

The choice of representation depends on the number of terms. For N Boolean variables:

Let C_SOP be the number of terms in the canonical Sum of Products representation.

Let C_POS be the number of terms in the canonical Product of Sums representation.

Then C_SOP + C_POS = 2^N, because every row of the truth table contributes exactly one term to one of the two forms. In the above example C_SOP = 4 and C_POS = 4, so either choice is equally good. However, if the Sum of Products representation of a three-variable function has 6 terms, then the Product of Sums representation has only 2 terms, which might recommend it.

Non-Canonical Forms

The basic definition of a canonical form (both SOP and POS)

is that every term contain each

variable, either in the negated or non-negated state. In the example above, note that every

product term in the SOP form contains all three variables A, B, and C in some

form. The

same holds for the POS forms.

It is often the case that a non-canonical form is

simpler. For example, one can easily

show

that F2(A, B, C) = A·B + A·C + B·C. Note that in this simplified form, not all

variables

appear in each of the terms. Thus, this

is a normal SOP form, but not a canonical form.

The simplification can be done by one of three ways:

algebraic methods, Karnaugh Maps

(K-Maps), or the Quine-McCluskey procedure. Here we present a simplification of

F2(A, B, C) by the algebraic method with notes to the appropriate postulates

and theorems.

The idempotency theorem states that for any Boolean expression X, we have X + X = X. Thus X = X + X = X + X + X and A·B·C = A·B·C + A·B·C + A·B·C, as A·B·C is a valid Boolean expression for any values of A, B, and C.

F2 = A’·B·C + A·B’·C + A·B·C’ + A·B·C   Original form

= A’·B·C + A·B’·C + A·B·C’ + A·B·C + A·B·C + A·B·C   Idempotence

= A’·B·C + A·B·C + A·B’·C + A·B·C + A·B·C’ + A·B·C   Commutativity

= (A’ + A)·B·C + A·(B’ + B)·C + A·B·(C’ + C)   Distributivity

= 1·B·C + A·1·C + A·B·1   Complement (X’ + X = 1)

= B·C + A·C + A·B   Identity (1·X = X)

= A·B + A·C + B·C   Commutativity
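Because F2 has only three variables, the result of the algebraic simplification can also be confirmed by brute force over all eight input combinations. The following Python sketch does so; the function names are illustrative only.

```python
from itertools import product


def f2_canonical(a, b, c):
    """Canonical SOP: A'·B·C + A·B'·C + A·B·C' + A·B·C."""
    na, nb, nc = 1 - a, 1 - b, 1 - c
    return (na & b & c) | (a & nb & c) | (a & b & nc) | (a & b & c)


def f2_simplified(a, b, c):
    """Simplified normal SOP: A·B + A·C + B·C."""
    return (a & b) | (a & c) | (b & c)


# Compare the two forms on every row of the truth table.
equivalent = all(
    f2_canonical(*row) == f2_simplified(*row)
    for row in product((0, 1), repeat=3)
)
print(equivalent)
```

An exhaustive check of this kind is practical only for small numbers of variables, which is the same limitation the text notes for truth tables themselves.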

The main reason to simplify Boolean expressions is to reduce

the number of logic gates

required to implement the expressions.

This was very important in the early days of

computer engineering when the gates themselves were costly and prone to

failure.

It is less of a concern these days.

More On Conversion Between Truth Tables and Boolean Expressions

We now give a brief discussion on the methods used by this

author to derive Boolean

expressions from truth tables and truth tables from canonical form Boolean

expressions.

We shall do this by example. Consider the truth table for our function F1, which is shown below.

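| Row | A | B | C | F1 |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 |
| **1** | **0** | **0** | **1** | **1** |
| **2** | **0** | **1** | **0** | **1** |
| 3 | 0 | 1 | 1 | 0 |
| **4** | **1** | **0** | **0** | **1** |
| 5 | 1 | 0 | 1 | 0 |
| 6 | 1 | 1 | 0 | 0 |
| **7** | **1** | **1** | **1** | **1** |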

The rows for which the function F1 has value 1 are shown in

bold font. As noted above, we

can write F1 in the S-list

form as F1 = S(1,

2, 4, 7). To convert this form to

canonical SOP,

we just replace the decimal numbers by binary; thus F1 = S(001,

010, 100, 111). To work

from the truth tables, we just note the values of A, B, and C in the selected

rows.

First write the Boolean expression in a form that cannot be

correct, writing one identical

Boolean product term for each of the four rows for which F1 = 1.

F1(A, B, C) = A·B·C + A·B·C + A·B·C + A·B·C

Then write under each term the 0’s and 1’s for the corresponding row.

F1(A, B, C) = A·B·C + A·B·C + A·B·C + A·B·C
              0 0 1   0 1 0   1 0 0   1 1 1

Wherever one sees a 0, complement the corresponding variable, to get.

F1(A, B, C) = A’·B’·C + A’·B·C’ + A·B’·C’ + A·B·C

To produce the truth-table from the canonical form SOP

expression, just write a 0 under

every complemented variable and a 1 under each variable that is not complemented.

F2(A, B, C) = A’·B·C + A·B’·C + A·B·C’ + A·B·C
              0 1 1    1 0 1    1 1 0    1 1 1

This function can be written as F2 = S(011, 101, 110, 111) in

binary or F2 = S(3,

5, 6, 7).

To create the truth table for this, just make 8 rows and fill rows 3, 5, 6, and

7 with 1’s and

the other rows with 0’s.

It is also possible to generate the canonical POS

expressions using a similar procedure.

Consider again the truth table for F1, this time with the rows with 0 values

highlighted.

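| Row | A | B | C | F1 |
|---|---|---|---|---|
| **0** | **0** | **0** | **0** | **0** |
| 1 | 0 | 0 | 1 | 1 |
| 2 | 0 | 1 | 0 | 1 |
| **3** | **0** | **1** | **1** | **0** |
| 4 | 1 | 0 | 0 | 1 |
| **5** | **1** | **0** | **1** | **0** |
| **6** | **1** | **1** | **0** | **0** |
| 7 | 1 | 1 | 1 | 1 |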

The rows for which the function F1 has value 0 are shown in

bold font. As noted above, we

can write F1 in the P-list

form as F1 = P(0,

3, 5, 6). To convert this form to

canonical POS,

we just replace the decimal numbers by binary; thus F1 = P(000,

011, 101, 110). To work

from the truth tables, we just note the values of A, B, and C in the selected

rows.

Again we write a Boolean expression with one sum term for

each of the four rows for

which the function has value 0. At the

start, this is not correct.

F1 = (A + B + C) · (A + B + C) · (A + B + C) · (A + B + C)

Now write the expression with 0’s and 1’s for the corresponding row numbers.

F1 = (A + B + C) · (A + B + C) · (A + B + C) · (A + B + C)
      0   0   0     0   1   1     1   0   1     1   1   0

Wherever one sees a 1, complement the corresponding variable, to get.

F1 = (A + B + C)·(A + B’ + C’)·(A’ + B + C’)·(A’ + B’ + C)

To produce the truth-table from the canonical form POS

expression, just write a 1 under

every complemented variable and a 0 under each variable that is not

complemented.

F2 = (A + B + C)·(A + B + C’)·(A + B’ + C)·(A’ + B + C)
      0   0   0   0   0   1    0   1    0   1    0   0

This function can be written as F2 = P(000, 001, 010, 100) in

binary or F2 = P(0,

1, 2, 4).

To create the truth table for this, just make 8 rows and fill rows 0, 1, 2, and

4 with 0’s and

the other rows with 1’s.
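Filling in a truth table from an S–list or a P–list is mechanical enough to sketch in Python. The helper name `column_from_list` and its `listed_value` parameter are assumptions made for this illustration: pass 1 when the list is an S–list and 0 when it is a P–list.

```python
def column_from_list(rows, num_vars, listed_value):
    """Build the function column of a truth table.

    rows         -- the row numbers appearing in the list
    num_vars     -- number of Boolean variables (the table has 2**num_vars rows)
    listed_value -- 1 for an S-list (listed rows hold 1), 0 for a P-list
    """
    other_value = 1 - listed_value
    return [listed_value if r in rows else other_value
            for r in range(2 ** num_vars)]


from_s = column_from_list({3, 5, 6, 7}, 3, 1)  # F2 = S(3, 5, 6, 7)
from_p = column_from_list({0, 1, 2, 4}, 3, 0)  # F2 = P(0, 1, 2, 4)

# Print each row number in binary next to the function value.
for row, value in enumerate(from_s):
    print(format(row, "03b"), value)

print(from_s == from_p)
```

Both lists produce the same column, as they must, since they describe the same function.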

Implementation of Boolean Logic by Circuitry

Having worn ourselves out on the algebraic

view of Boolean functions, we now return to the

fact that these functions must be implemented in hardware if a digital computer

is to be built.

This section presents the circuits used to implement basic Boolean functions.

The Boolean values are represented by specific voltages in

the electronic circuitry. As a

result of experience, it has been found desirable only to have two voltage

levels, called High

and Low or H and L. This leads to two

types of logic

Negative Logic High = 0 Low = 1

Positive Logic High = 1 Low = 0

This course will focus on Positive Logic and ignore Negative

Logic. As a matter of fact, we

shall only occasionally concern ourselves with voltage levels. In Positive Logic, +5 volts is

taken as the high level and 0 volts as the low level. These are the ideals. In actual practice,

the standards allow some variation depending on whether the level is output or

input.

| | Output of Logic Gate | Input to Logic Gate |
|---|---|---|
| Logic High | 2.4 – 5.0 volts | 2.0 – 5.0 volts |
| Logic Low | 0.0 – 0.4 volts | 0.0 – 0.8 volts |
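The voltage ranges in the table can be expressed as two small Python functions. This is a sketch only; the function names are invented for the illustration, and voltages outside every range are simply reported as invalid.

```python
def input_logic_level(volts):
    """Classify a voltage presented to a gate input.

    Returns 1 or 0 for a valid level, or None if the voltage falls in
    the undefined region between the two input ranges.
    """
    if 2.0 <= volts <= 5.0:
        return 1
    if 0.0 <= volts <= 0.8:
        return 0
    return None


def output_is_within_standard(volts):
    """True if a gate output voltage meets the tighter output ranges."""
    return 0.0 <= volts <= 0.4 or 2.4 <= volts <= 5.0


# A signal that left a gate at 2.4 volts and degraded to 2.2 volts is no
# longer a legal output level, but it is still read as logic 1 at an input.
print(output_is_within_standard(2.2), input_logic_level(2.2))
print(input_logic_level(1.5))
```

The gap between the output and input ranges is what lets a signal lose a few tenths of a volt in transit and still be interpreted correctly.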

The tighter constraints on output allow for voltage degradation

due to the practicalities of

implementing electrical circuits. One of

these practicalities is called fan-out,

used to denote

the maximum number of gates that can be attached to the output of one specific

gate. This

relates to the maximum amount of current that a given gate can produce. The fan-out

problem is illustrated in everyday life when one sees the lights dim as a new

appliance, such

as an electric furnace, turns on. There

is also an easily-observed hydraulic equivalent to the

limited fan-out problem: wait until someone is in the shower and then flush

every commode

in the house. The water main pipe can

only supply so much cold water so that the pressure

to the shower will drop, often with hilarious consequences.

Basic Gates for Boolean Functions

We now discuss a number of basic logic gates used to

implement Boolean functions. The

gates of interest at this point are AND, OR, NOT, NAND (NOT AND), NOR (NOT OR)

and

XOR (Exclusive OR). The Exclusive OR gate is the same as the OR gate except that the output is 0 (False) when both inputs are 1 (True). The symbol for XOR is ⊕.

The first gate to be discussed is the OR gate. The truth table for a two-input OR gate is

shown below. In general, if any input to

an OR gate is 1, the output is 1.

| A | B | A + B |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 1 |

The next gate to be discussed is the AND gate. The truth table for a two-input AND gate is

shown now. In general, if any input to

an AND gate is 0, the output is 0.

| A | B | A · B |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 0 |
| 1 | 0 | 0 |
| 1 | 1 | 1 |

The third of the four basic gates is the single input NOT gate. Note that there are two ways of denoting the NOT function. NOT(A) is denoted as either A’ or $\overline{A}$. We use $\overline{A}$ as often as possible to represent NOT(A), but may become lazy and use the other notation.

| A | $\overline{A}$ |
|---|---|
| 0 | 1 |
| 1 | 0 |

The last of the gates to be discussed at this first stage is

not strictly a basic gate. We include

it at this level because it is extremely convenient. This is the two-input Exclusive OR (XOR)

gate, the function of which is shown in the following truth table.

| A | B | A ⊕ B |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

We note immediately an interesting and very useful connection between the XOR function and the NOT function. For any A, A ⊕ 0 = A, and A ⊕ 1 = A’. The proof is by truth table.

| A | B | A ⊕ B | Result |
|---|---|---|---|
| 0 | 0 | 0 | A |
| 1 | 0 | 1 | A |
| 0 | 1 | 1 | A’ |
| 1 | 1 | 0 | A’ |

This result is extremely useful when designing a ripple carry adder/subtractor.
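The identity can be read as saying that an XOR gate acts as a controlled inverter: one input carries the data and the other decides whether it is passed unchanged or complemented. A minimal Python sketch, with invented function names, is shown here.

```python
def controlled_invert(a, control):
    """XOR used as a controlled inverter.

    control = 0 passes `a` unchanged; control = 1 outputs NOT(a).
    """
    return a ^ control


def invert_word(bits, control):
    """Apply the same control line to every bit of a word."""
    return [controlled_invert(b, control) for b in bits]


word = [1, 0, 1, 1]
print(invert_word(word, 0))  # [1, 0, 1, 1]
print(invert_word(word, 1))  # [0, 1, 0, 0]
```

Applying one control line to every bit of a word, as `invert_word` does, is one plausible way such a result might be used when the same hardware must either add or subtract; the details belong to the later discussion of the adder/subtractor.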

The basic logic gates are defined in terms of the binary

Boolean functions. Thus, the basic

logic gates are two-input AND gates, two-input OR gates, NOT gates, two-input

NAND

gates, two-input NOR gates, and two-input XOR gates.

It is common to find three-input and four-input varieties of

AND, OR, NAND, and NOR

gates. The XOR gate is essentially a

two-input gate; three input XOR gates may exist but

they are hard to understand.

Consider a four input AND gate. The output function is described as an easy generalization

of the two input AND gate; the output is 1 (True) if and only if all of the

inputs are 1,

otherwise the output is 0. One can

synthesize a four-input AND gate from three two-input

AND gates or easily convert a four-input AND gate into a two-input AND gate. The student

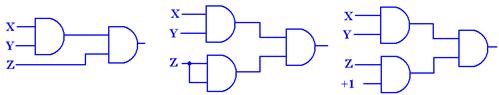

should realize that each figure below represents only one of several good

implementations.

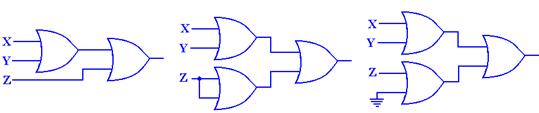

Figure: Three 2-Input AND Gates Make a 4-Input AND Gate

Figure: Another Way to Configure Three 2-Input AND Gates as a 4-Input

AND Gate

Figure:

Here is the general rule for N-Input AND gates and N-Input OR gates.

AND Output is 0 if any input is

0. Output is 1 only if all inputs are 1.

OR Output is 1 if any input is 1. Output is 0 only if all inputs are 0.

XOR For N > 2, N-input XOR gates are not useful and will be avoided.
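The general rule, and the claim that a four-input AND gate can be built from three two-input AND gates in more than one way, can be checked with a short Python sketch. The two arrangements below (a balanced tree and a chain) are assumed for illustration and may not match the figures exactly.

```python
from functools import reduce
from itertools import product


def and2(a, b):
    """Two-input AND gate."""
    return a & b


def or2(a, b):
    """Two-input OR gate."""
    return a | b


def and4_tree(a, b, c, d):
    """Three 2-input ANDs: two gates feed a third."""
    return and2(and2(a, b), and2(c, d))


def and4_chain(a, b, c, d):
    """Three 2-input ANDs connected one after another."""
    return and2(and2(and2(a, b), c), d)


def and_n(*inputs):
    """N-input AND: output is 1 only if all inputs are 1."""
    return reduce(and2, inputs)


def or_n(*inputs):
    """N-input OR: output is 0 only if all inputs are 0."""
    return reduce(or2, inputs)


# Both arrangements agree with the general rule on all 16 input combinations.
same = all(
    and4_tree(*v) == and4_chain(*v) == and_n(*v) == (1 if all(v) else 0)
    for v in product((0, 1), repeat=4)
)
print(same)
```

Logically the two arrangements are identical; they differ only in how many gates a signal must pass through, which matters once gate delays are considered.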

“Derived Gates”

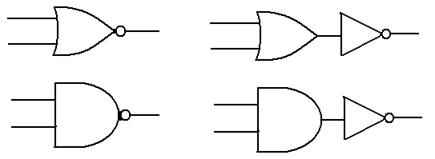

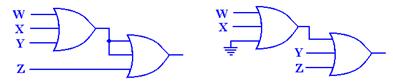

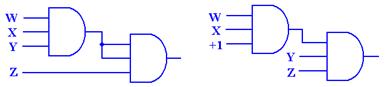

We now show 2 gates that may be considered as derived from the above: NAND and NOR.

The NOR gate is the same as an OR gate followed by a NOT gate.

The NAND gate is the same as an AND gate followed by a NOT gate.

Electrical engineers may validly object that these two gates

are not “derived” in the sense

that they are less basic than the gates previously discussed. In fact, NAND gates are often

used to make AND gates. As always, we

are interested in the non-engineering approach and

stay with our view: NOT, AND, and OR are basic.

We shall ignore the XNOR gate and, if

needed, implement its functionality by an XOR gate followed by a NOT gate.

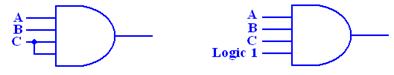

As an exercise in logic, we show that the NAND (Not AND)

gate is fundamental in that it

can be used to synthesize the AND, OR, and NOT gates. We begin with the basic NAND.

The basic truth table for the NAND is given by

| X | Y | X·Y | (X·Y)’ |
|---|---|---|---|
| 0 | 0 | 0 | 1 |
| 0 | 1 | 0 | 1 |
| 1 | 0 | 0 | 1 |
| 1 | 1 | 1 | 0 |

From this truth table, we see that (0·0)’ = 1 and (1·1)’ = 0, so we conclude that (X·X)’ = X’ and immediately have the realization of the NAND gate as a NOT gate.

Figure: A NAND Gate Used as a NOT Gate

To synthesize an AND gate from a NAND gate, we just note that NOT NAND is the same as NOT NOT AND, which is the same as AND. In other words ((X·Y)’)’ = X·Y, and we follow the NAND gate with the NOT gate, implemented from another NAND gate.

Here is the AND gate as implemented from two NAND gates.

Figure: Two NAND Gates to Make an AND Gate

In order to implement an OR gate, we must make use of DeMorgan’s law. As stated above, DeMorgan’s law is usually given as (X·Y)’ = X’ + Y’. We use straightforward algebraic manipulation to arrive at a variant statement of DeMorgan’s law.

(X’·Y’)’ = (X’)’ + (Y’)’ = X + Y

Using this strange equality, a direct result of DeMorgan’s law, we have the OR circuit.

Figure: Three NAND Gates Used to Make an OR Gate
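The three constructions can be verified exhaustively with a short Python sketch in which NAND is the only primitive. The function names are illustrative; the gate counts in the comments follow the figure captions.

```python
from itertools import product


def nand(x, y):
    """The only primitive gate used below."""
    return 1 - (x & y)


def not_gate(x):
    """One NAND gate with both inputs tied together: (X·X)' = X'."""
    return nand(x, x)


def and_gate(x, y):
    """Two NAND gates: a NAND followed by a NAND-based NOT."""
    return not_gate(nand(x, y))


def or_gate(x, y):
    """Three NAND gates: (X'·Y')' = X + Y by DeMorgan's law."""
    return nand(not_gate(x), not_gate(y))


# Compare each synthesized gate with the ordinary Boolean operation.
all_correct = all(
    not_gate(x) == 1 - x and and_gate(x, y) == (x & y) and or_gate(x, y) == (x | y)
    for x, y in product((0, 1), repeat=2)
)
print(all_correct)
```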

Circuits and Truth Tables

We now address an obvious

problem – how to relate circuits to Boolean expressions.

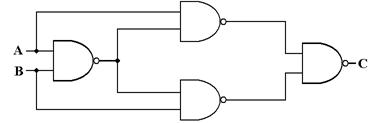

The best way to do this is to work some examples. Here is the first one.

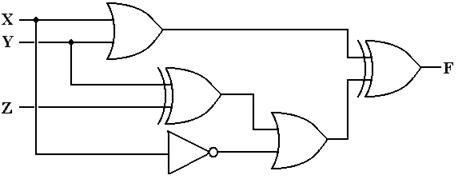

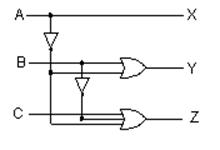

Question:

What are the truth table and the Boolean expressions that describe the following circuit?

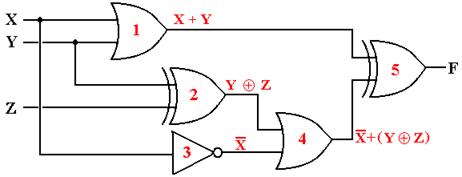

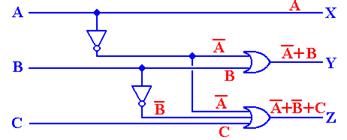

The method to get the answer is

to label each gate and determine the output of each. The

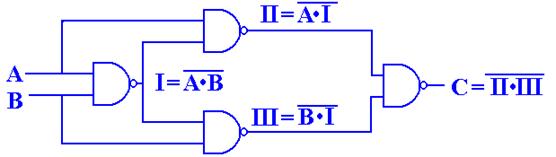

following diagram shows the gates as labeled and the output of each gate.

The outputs of each gate are as follows:

The output of gate 1 is (X + Y),

The output of gate 2 is (Y ⊕ Z),

The output of gate 3 is X’,

The output of gate 4 is X’ + (Y ⊕ Z), and

The output of gate 5 is (X + Y) ⊕ [X’ + (Y ⊕ Z)]

We now produce the truth table for the function.

| X | Y | Z | X + Y | Y ⊕ Z | X’ | X’ + (Y ⊕ Z) | (X + Y) ⊕ [X’ + (Y ⊕ Z)] |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 1 | 1 | 1 |
| 0 | 0 | 1 | 0 | 1 | 1 | 1 | 1 |
| 0 | 1 | 0 | 1 | 1 | 1 | 1 | 0 |
| 0 | 1 | 1 | 1 | 0 | 1 | 1 | 0 |
| 1 | 0 | 0 | 1 | 0 | 0 | 0 | 1 |
| 1 | 0 | 1 | 1 | 1 | 0 | 1 | 0 |
| 1 | 1 | 0 | 1 | 1 | 0 | 1 | 0 |
| 1 | 1 | 1 | 1 | 0 | 0 | 0 | 1 |
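The gate-by-gate method translates directly into code: compute the output of each labeled gate in order, feeding earlier outputs into later gates. The following Python sketch follows the gate numbering given above; the function name `circuit` is an illustrative choice.

```python
from itertools import product


def circuit(x, y, z):
    """Return the outputs of gates 1 through 5 for one input combination."""
    g1 = x | y      # gate 1: X + Y
    g2 = y ^ z      # gate 2: Y XOR Z
    g3 = 1 - x      # gate 3: X'
    g4 = g3 | g2    # gate 4: X' + (Y XOR Z)
    g5 = g1 ^ g4    # gate 5: (X + Y) XOR [X' + (Y XOR Z)]
    return g1, g2, g3, g4, g5


# One line per row of the truth table: inputs, then the five gate outputs.
for x, y, z in product((0, 1), repeat=3):
    print(x, y, z, *circuit(x, y, z))

final_column = [circuit(*row)[4] for row in product((0, 1), repeat=3)]
print(final_column)  # [1, 1, 0, 0, 1, 0, 0, 1]
```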

The above is the truth table for the function realized by the figure on the previous page.

Let us give a simpler representation.

| X | Y | Z | F(X, Y, Z) |
|---|---|---|---|
| 0 | 0 | 0 | 1 |
| 0 | 0 | 1 | 1 |
| 0 | 1 | 0 | 0 |
| 0 | 1 | 1 | 0 |
| 1 | 0 | 0 | 1 |
| 1 | 0 | 1 | 0 |
| 1 | 1 | 0 | 0 |
| 1 | 1 | 1 | 1 |

We now produce both the SOP and POS representations of this

function. For the SOP,

we look at the four 1’s of the function; for the POS, we look at the four

zeroes.

SOP

F(X, Y, Z) = X’·Y’·Z’ + X’·Y’·Z + X·Y’·Z’ + X·Y·Z
             0 0 0      0 0 1     1 0 0     1 1 1

POS

F(X, Y, Z) = (X + Y’ + Z) · (X + Y’ + Z’) · (X’ + Y + Z’) · (X’ + Y’ + Z)
              0   1    0     0   1    1      1    0   1      1    1    0

To simplify in SOP, we write the function in a slightly more

complex form.

F(X, Y, Z) = X’·Y’·Z’ + X’·Y’·Z + X’·Y’·Z’ + X·Y’·Z’ + X·Y·Z

= X’·Y’·(Z’ + Z) + (X + X’)·Y’·Z’ + X·Y·Z

= X’·Y’ + Y’·Z’ + X·Y·Z

To simplify in POS, we again write the function in a

slightly bizarre form.

F(X, Y, Z) = (X + Y’ + Z) · (X + Y’ + Z’) · (X’ + Y + Z’) · (X’ + Y’ + Z) · (X + Y’ + Z)

= (X + Y’) · (X’ + Y + Z’) · (Y’ + Z)
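Both simplified forms should produce the same truth table as the original circuit. A brute-force comparison in Python, using invented function names, confirms this for all eight input combinations.

```python
from itertools import product

# The function column derived from the circuit, rows 000 through 111.
EXPECTED = [1, 1, 0, 0, 1, 0, 0, 1]


def f_sop_simplified(x, y, z):
    """X'·Y' + Y'·Z' + X·Y·Z"""
    nx, ny, nz = 1 - x, 1 - y, 1 - z
    return (nx & ny) | (ny & nz) | (x & y & z)


def f_pos_simplified(x, y, z):
    """(X + Y')·(X' + Y + Z')·(Y' + Z)"""
    nx, ny, nz = 1 - x, 1 - y, 1 - z
    return (x | ny) & (nx | y | nz) & (ny | z)


rows = list(product((0, 1), repeat=3))
sop_column = [f_sop_simplified(*r) for r in rows]
pos_column = [f_pos_simplified(*r) for r in rows]
print(sop_column == EXPECTED, pos_column == EXPECTED)
```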

We close this discussion by presenting the canonical SOP and POS using another notation.

We rewrite the truth table for F(X, Y, Z), adding row numbers.

| Row | X | Y | Z | F(X, Y, Z) |
|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 1 |
| 1 | 0 | 0 | 1 | 1 |
| 2 | 0 | 1 | 0 | 0 |
| 3 | 0 | 1 | 1 | 0 |
| 4 | 1 | 0 | 0 | 1 |
| 5 | 1 | 0 | 1 | 0 |
| 6 | 1 | 1 | 0 | 0 |
| 7 | 1 | 1 | 1 | 1 |

Noting the positions of the 1’s and 0’s in the truth table gives us our standard notation.

F(X, Y, Z) = S(0, 1, 4, 7)

F(X, Y, Z) = P(2, 3, 5, 6)

Question:

What is the circuit that corresponds to the following two Boolean functions?

The reader might note that the two are simply different representations of the same function.

![]()

The answer here is just to draw

the circuits. The general rule is

simple.

SOP One OR gate connecting the output of a number of AND gates.

POS One AND gate connecting the output of a number of OR gates.

Here is the circuit for F2(A, B, C). It can be simplified.

Here is the circuit for G2(A, B, C). It can be simplified.

The Non–Inverting Buffer

We now investigate a number of circuit

elements that do not directly implement Boolean

functions. The first is the

non–inverting buffer, which is denoted by the following symbol.

Logically, a buffer does

nothing. Electrically, the buffer serves

as an amplifier converting a

degraded signal into a more useable form; specifically it does the following.

A

logic 1 (voltage in the range 2.0 – 5.0 volts) will be output as 5.0 volts.

A logic 0 (voltage in the

range 0.0 – 0.8 volts) will be output as 0.0 volts.

While one might consider this as an amplifier, it is better considered as a “voltage adjuster”. We shall see another use of this and similar circuits when we consider MSI (Medium Scale Integrated) circuits in a future chapter.

More “Unusual” Circuits

Up to this point, we have been mostly considering logic

gates in their ability to implement

the functions of Boolean algebra. We now

turn our attention to a few circuits that depend as

much on the electrical properties of the basic gates as the Boolean functions

they implement.

We begin with a fundamental property of all electronic gates, called “gate delay”.

This property will become significant when we consider flip–flops.

We begin with consideration of a simple NOT gate, with Y = X’, as shown below.

From the viewpoint of Boolean algebra, there is nothing

special about this gate. It

implements the Boolean NOT function; nothing else. From the viewpoint of actual

electronic circuits, we have another issue.

This issue, called “gate delay”, reflects the fact

that the output of a gate does not change instantaneously when the input

changes. The output

always lags the input by an interval of time that is the gate delay. For standard TTL circuits,

such as the 74LS04 that implements the NOT function, the gate delay is about

ten

nanoseconds; the output does not change until 10 nanoseconds after the input

changes.

In the next figure, we postulate a NOT gate with a gate delay of 10 nanoseconds with an input pulse of width 20 nanoseconds. Note that the output trails the input by the gate delay; in particular we have an interval of 10 nanoseconds (one gate delay) during which X = 1 and the output Y = 1, and also an interval of 10 nanoseconds during which X = 0 and the output Y = 0. This is not a fault of the circuit; it is just a well–understood physical reality.

The table just below gives a list of gate delay times for some

popular gates. The source of

this is the web page http://www.cs.uiowa.edu/~jones/logicsim/man/node5.html

| Number | Description | Gate Delay in Nanoseconds |
|---|---|---|
| 74LS00 | 2–Input NAND | 9.5 |
| 74LS02 | 2–Input NOR | 10.0 |
| 74LS04 | Inverter (NOT) | 9.5 |
| 74LS08 | 2–Input AND | 9.5 |
| 74LS32 | 2–Input OR | 14.0 |
| 74LS86 | 2–Input XOR | 10.0 |

We can see that there is some variation in the gate delay times for various basic logic gates, but that the numbers tend to be about 10.0 nanoseconds. In our discussions that follow we shall make the assumption that all logic gates display the same delay time, which we shall call a “gate delay”. While we should understand that this time value is about 10 nanoseconds, we shall not rely on its precise value.

There are a number of designs

that call for introducing a fixed delay in signal propagation so

that the signal does not reach its source too soon. This has to do with synchronization issues

seen in asynchronous circuits. We shall

not study these, but just note them.

The most straightforward delay circuit is based on the Boolean identity (X’)’ = X.

This simple Boolean identity leads to the delay circuit: two NOT gates in series leave the logical value unchanged, but each contributes one gate delay.

The delay is shown in the timing diagram below, in which Z lags X by 20 nanoseconds.

The rule for application of gate delays is stated simply below.

The output of a gate is stable one gate

delay after all of its inputs are stable.

We should note that the delay

circuit above is logically equivalent to the non–inverting buffer

discussed just above. The non–inverting

buffer is different in two major ways: its time delay

is less, and it usually serves a different purpose in the design.

It should be clear that the

output of a gate changes one gate delay after any of its inputs

change. To elaborate on this statement,

let us consider an exclusive or (XOR) chip.

The

truth table for an XOR gate is shown in the following table.

| X | Y | X ⊕ Y |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

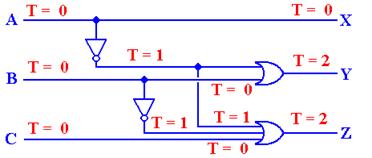

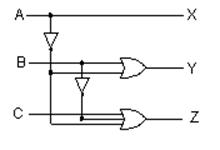

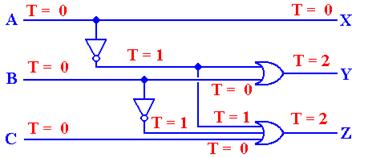

We now examine a timing diagram for

this circuit under the assumption that the inputs are

initially both X = 0 and Y = 0. First X changes

to 1 and some time later so does Y.

Here is the circuit.

Here is the timing diagram.

Note that the output, Z, changes

one gate delay after input X changes and again one gate

delay after input Y changes. This

unusual diagram is shown only to make the point that the

output changes one gate delay after any change of input. In the more common cases, all

inputs to a circuit change at about the same time, so that this issue does not

arise.

We now come to a very important circuit, one that seems to implement a very simple Boolean identity. Specifically, we know that for all Boolean variables X: X·X’ = 0.

Given the above identity, the output of the following circuit

would be expected to be

identically 0. Such a consideration does

not account for gate delays.

Suppose that input X goes high and stays high for some time,

possibly a number of gate

delays. The output Z is based on the

fact that the output Y lags X by one gate delay.

Suppose we are dealing with the typical value of 10 nanoseconds for a gate delay.

At T = 10, the value of X changes. Neither Y nor Z changes.

At T = 20, the values of each of Y and Z change. The value of Y reflects the value of X at T = 10, so Y becomes 0. The value of Z reflects the value of both X and Y at T = 10. At T = 10, we had both X = 1 and Y = 1, so Z becomes 1 at T = 20.

At T = 30, the value of Z changes again to reflect the values of X and Y at T = 20. Z becomes 0.
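The timing argument can be replayed with a small discrete-time simulation in Python. This is a sketch under stated assumptions: time advances in steps of one gate delay (taken as 10 nanoseconds), Y is the output of a NOT gate driven by X, Z is the output of an AND gate driven by X and Y, and each gate output at one step reflects its inputs at the previous step. The function name and the sampled-waveform representation are inventions for the example.

```python
GATE_DELAY_NS = 10  # assumed uniform gate delay


def simulate_pulse(x_wave):
    """Simulate Y = NOT X and Z = X AND Y with one gate delay per gate.

    x_wave -- values of X sampled at T = 0, 10, 20, ... nanoseconds
    Returns a list of (T, X, Y, Z) tuples.
    """
    # Assume the circuit has settled before T = 0: Y = X' and Z = 0.
    prev_x = x_wave[0]
    y, z = 1 - prev_x, 0
    trace = []
    for step, x in enumerate(x_wave):
        if step > 0:
            # Each output reflects its gate's inputs one gate delay earlier.
            new_y = 1 - prev_x
            new_z = prev_x & y  # y still holds the previous step's value here
            y, z = new_y, new_z
        trace.append((step * GATE_DELAY_NS, x, y, z))
        prev_x = x
    return trace


# X is 0 at T = 0, goes high at T = 10, and stays high.
trace = simulate_pulse([0, 1, 1, 1, 1])
for t, x, y, z in trace:
    print(f"T = {t:2d}  X = {x}  Y = {y}  Z = {z}")
```

In the printed trace, Z is 1 only in the row for T = 20, which is the one-gate-delay pulse described in the text.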

What we have in the above circuit is a design to produce a

very short pulse, of time duration

equal to one gate delay. This facility

will become very useful when we begin to design

edge–triggered flip–flops in a future chapter.

Tri–State Buffers

We have now seen all of the

logic gates to be used in this course.

There is one more gate

type that needs to be examined – the tri-state

buffer. We begin with the

examination of a

simple (non-inverting buffer) and comment on its function. We discuss two basic types:

enabled–high and enabled–low. The

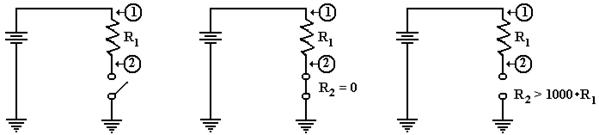

circuit diagrams for these are shown below.

The difference between these two circuits relates to how the

circuits are enabled. Note that

the enabled-low tri–state buffer shows the standard use of the NOT dot on

input.

The following figure shows two

ways of implementing an Enabled–Low Tri-State buffer,

one using an Enabled-High Tri-State with a NOT gate on the Enable line. The significance

of the overbar on the Enable input is that the gate

is active when the control is logic 0.

Figure: Two Views of an Enabled-Low Tri-State Buffer

A tri-state buffer acts as a

simple buffer when it is enabled; it passes the input through while

adjusting its voltage to be closer to the standard. When the tri-state buffer is not enabled, it

acts as a break in the circuit or an open switch (if you understand the

terminology). A gate

may be enabled high (C = 1) or enabled low (C = 0). Consider an enabled–high tri-state.

Figure: Circuits Equivalent to an Enabled

Tri–State and a Disabled Tri-State

When the enable signal is C = 1,

the tri-state acts as a simple buffer and asserts its input as an

output. When the enable signal is C = 0,

the tri-state does not assert anything on the output.

Another way to describe a tri–state buffer is to say that it

is in a high-impedance state (often

called a “high–Z state” by engineers

who use the symbol “Z” for impedance) when it is not

enabled. This is simply to say that it offers such a resistance to transmission of its input that for all practical purposes it does not assert anything on its output.

The definition of this “third state” in a tri–state buffer is both obvious and subtle. Compare the two circuits in the figure below.

One is a buffer; the other is a tri–state buffer.

For the circuit on the left, either F = 0 (0 volts) or F = 1

(5 volts). There is no other option.

For the circuit on the right, when C = 1 then F = A, and takes a value of

either 0 volts or 5

volts, depending on the value of the input.

When C = 0, F is simply not defined.

One of the better ways to understand the tri-state buffer is

to consider the following circuit

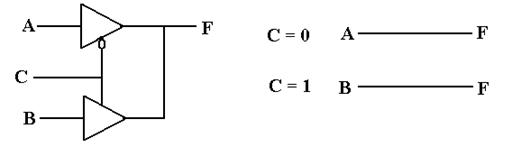

with two Boolean inputs A and B, one output F, and an enable signal C.

Note that the two tri–state buffers are enabled differently, so that the top buffer is enabled if and only if the bottom buffer is not enabled, and vice versa. The design ensures that at no time are both of the tri–state buffers enabled, so that there is no conflict of voltages.

C = 0 Only the top buffer is enabled F = A

C = 1 Only the bottom buffer is enabled F = B
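The behavior of this two-buffer circuit can be modeled in Python by letting `None` stand for the high-impedance state, in which a buffer asserts nothing. This is an illustrative model only: the function names are invented, and the gate-level expression used for comparison, C’·A + C·B, is an assumption about what a logically equivalent circuit might compute.

```python
from itertools import product


def tri_state(data, enabled):
    """Pass `data` through when enabled; otherwise assert nothing (None)."""
    return data if enabled else None


def shared_line(*drivers):
    """Combine buffer outputs wired to one line.

    At most one driver may assert a value; two at once is a conflict.
    """
    active = [d for d in drivers if d is not None]
    if len(active) > 1:
        raise ValueError("conflict: more than one buffer is enabled")
    return active[0] if active else None


def select(a, b, c):
    """Top buffer is enabled-low (C = 0), bottom is enabled-high (C = 1)."""
    top = tri_state(a, c == 0)
    bottom = tri_state(b, c == 1)
    return shared_line(top, bottom)


# Compare with an assumed gate-level equivalent: F = C'·A + C·B.
matches = all(
    select(a, b, c) == (((1 - c) & a) | (c & b))
    for a, b, c in product((0, 1), repeat=3)
)
print(matches)
```

Because exactly one of the two buffers is enabled for either value of C, `shared_line` never sees a conflict in this circuit; the check exists only to make the "no conflict of voltages" requirement explicit.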

The reader will note that the above circuit is logically equivalent to the one that follows.

Given only this simple example,

one might reasonably question the utility of tri–state buffers.

It appears that they offer a novel and complex solution to a simple problem. The real use of

these buffers lies in placing additional devices on a common bus, a situation

in which the use

of larger OR gates might prove difficult.

Before addressing the standard uses of the tri–state buffer,

we must review some of the basics

of direct current electricity: voltage, current, and resistance.

Review of Basic Electronics

A traditional presentation of

this material might have begun with a review of the basic

concepts of direct current electricity.

Your author has elected to postpone that discussion

until this point in the course, at which time it is needed.

A Basic Circuit

We begin our discussion with a

simple example circuit – a flashlight (or “electric torch” as

the Brits call it). This has three basic

components: a battery, a switch, and a light bulb. For

our purpose, the flashlight has two possible states: on and off. Here are two diagrams.

Light is Off        Light is On

In both figures, we see a light bulb connected to a battery via two wires and a switch. When the switch is open, it does not allow electricity to pass and the light is not illuminated. When the switch is closed, the electrical circuit is completed and the light is illuminated.

The figure above uses a few of the following basic circuit elements.

We now

describe each of these elements and then return to our flashlight example. The first

thing we should do is be purists and note the difference between a cell and a

battery, although

the distinction is quite irrelevant to this course. A cell is what one buys in the stores today

and calls a battery; these come in various sizes, including AA, AAA, C, and

D. Each of

these cells is rated at 1.5 volts, due to a common technical basis for their

manufacture.

Strictly speaking, a battery is a collection of cells, so that a typical

flashlight contains one

battery that comprises two cells; usually AA, C, or D. An automobile battery is truly a

battery, being built from a number of lead-acid cells.

A light is a device that converts electric current into visible light. This is not a surprise.

A switch is a mechanical device that is either open (not allowing transmission

of current) or

closed (allowing the circuit to be completed).

Note that it is the opposite of a door, which

allows one to pass only when open.

The Idea of Ground

Consider

the above circuit, which suggests a two-wire design: one wire from the battery

to

the switch and then to the light bulb, and another wire from the bulb directly

to the battery.

One should note that the circuit does not require two physical wires, only two

distinct paths

for conducting electricity. Consider the

following possibility, in which the flashlight has a

metallic case that also conducts electricity.

Physical

Connection Equivalent

Circuit

Consider the circuit at left, which shows

the physical connection postulated. When

the

switch is open, no current flows. When

the switch is closed, current flows from the battery

through the switch and light bulb, to the metallic case of the flashlight,

which serves as a

return conduit to the battery. Even if

the metallic case is not a very good conductor, there

is much more of it and it will complete the circuit with no problem.

In electrical terms, the case of the flashlight is considered as a common ground, so that the equivalent circuit is shown at right.

Note the new symbol in this circuit – this is the ground

element. One can consider all ground

elements to be connected by a wire, thus completing

the circuit. In early days of radio, the

ground was the metallic case of the radio – an

excellent conductor of electricity.

Modern automobiles use the metallic body of the car itself

as the ground. Although iron and steel

are not excellent conductors of electricity, the sheer

size of the car body allows for the electricity to flow easily.

To conclude, the circuit at

left will be our representation of a

flashlight. The battery provides the

electricity, which flows through

the switch when the switch is closed, then through the light bulb, and

finally to the ground through which it returns to the battery.

As a convention, all switches

in diagrams will be shown in the open

position unless there is a good reason not to.

The student should regard the

above diagram as showing a switch which is not necessarily